About arc42

arc42, the template for documentation of software and system architecture.

Template Version 8.2 EN. (based upon AsciiDoc version), January 2023

Created, maintained and © by Dr. Peter Hruschka, Dr. Gernot Starke and contributors. See https://arc42.org.

1. Introduction and Goals

The Yovi Project is a software development initiative carried out within the Software Architecture course in the third year of the Software Computer Engineering degree at the University of Oviedo. The goal is to deliver a web-based version of the Y game for Micrati within a single academic semester, approximately four months long.

The game, named YOVI, is based on the classic board game Y. It is played on a triangular board, and two players compete to connect all three sides of the triangle. The first player to complete that connection wins the match.

The development team consists of the following members:

- Juan Fernández López (uo296143)
- Daniel López Fernández (uo289510)
- Juan Losada Pérez (uo302850)
- Pablo Pérez Saavedra (uo288816)
- Mateo Rubio Zamarreño (uo300069)

1.1. Requirements Overview

- System Architecture: The application is divided into three independent components:
  - Web Application: Built with TypeScript, providing the user interface for playing Y, managing accounts, and viewing rankings.
  - Users Service: Built with Node.js and Express, responsible for user registration, login, token validation, and player statistics.
  - Logic Module: Built with Rust, responsible for move validation, win-condition checking, and bot move selection through an HTTP API.
- Communication: Components exchange data through JSON-based HTTP APIs. Board states are represented using the YEN notation.
- Core Functionality: Implementation of the Y game with variable board sizes, playable in the web application and in CLI mode.
- Deployment: The system must be fully deployed and publicly accessible via the web.
- AI & Strategies: The computer opponent must support multiple AI strategies and difficulty levels selectable by the user.
- User Management: Support for user registration, authentication, and persistent player statistics such as games played, wins, losses, and score.
- Bot Interaction: A documented API allowing external bots to request the next move through the `play` endpoint.
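
The player statistics named in the User Management requirement could be modeled roughly as follows. This is a minimal sketch, not the Users Service implementation: the type `PlayerStats`, the function `applyMatchResult`, and the scoring rule of three points per win are all assumptions made for illustration.

```typescript
// Hypothetical shape of the statistics stored per player. The fields mirror
// the items listed in the requirement: games played, wins, losses, and score.
interface PlayerStats {
  gamesPlayed: number;
  wins: number;
  losses: number;
  score: number;
}

type MatchResult = "win" | "loss";

// Assumed scoring rule, for illustration only: 3 points per win, 0 per loss.
const POINTS_PER_WIN = 3;

// Returns a new stats object instead of mutating the input, which keeps the
// function easy to unit test.
function applyMatchResult(stats: PlayerStats, result: MatchResult): PlayerStats {
  const won = result === "win";
  return {
    gamesPlayed: stats.gamesPlayed + 1,
    wins: stats.wins + (won ? 1 : 0),
    losses: stats.losses + (won ? 0 : 1),
    score: stats.score + (won ? POINTS_PER_WIN : 0),
  };
}

const initial: PlayerStats = { gamesPlayed: 0, wins: 0, losses: 0, score: 0 };
const afterTwoGames = applyMatchResult(applyMatchResult(initial, "win"), "loss");
console.log(afterTwoGames); // { gamesPlayed: 2, wins: 1, losses: 1, score: 3 }
```

Keeping the update logic in a pure function like this would let it be tested without a database connection.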

1.2. Quality Goals

| Quality Goal | Description |
|---|---|
| Performance | Ensure low latency and fast response times, particularly for AI move generation, to provide a fluid user experience. |
| Testability | The system must support high test coverage (unit, integration, e2e, and load tests) to guarantee reliability and facilitate maintenance. |
| Usability | The interface should be intuitive, aesthetic, and easy to navigate for any type of user. |
| Maintainability | The architecture must promote clean code and modularity, allowing for easy future updates, bug fixes, and documentation consistency. |

1.3. Stakeholders

| Role/Name | Contact | Expectations |
|---|---|---|
| Micrati (Client) | | A robust, integrated solution (Rust + TypeScript). |
| Professors | Celia Melendi: melendicelia@uniovi.es | Transparency in technical decisions through ADRs. |
| Development Team | Juan Fernández López - uo296143@uniovi.es; Daniel López Fernández - uo289510@uniovi.es; Juan Losada Pérez - uo302850@uniovi.es; Pablo Pérez Saavedra - uo288816@uniovi.es; Mateo Rubio Zamarreño - uo300069@uniovi.es | A modular architecture that enables parallel development. |
| End Users (Players) | | An attractive and fast web interface. |
| Bot Developers | | Exhaustive and clear API documentation (Swagger). |

2. Architecture Constraints

2.1. Technical Constraints

| ID | Constraint | Background/Motivation |
|---|---|---|
| TC1 | Frontend: React + TypeScript | The user interface is implemented as a React single-page application with TypeScript for safer component development. |
| TC2 | Game engine: Rust | The core engine for the Y game is implemented in Rust and exposed through an HTTP API and CLI entry point. |
| TC3 | User service: Node.js + Express | User registration, login, token validation, and player statistics are handled by a Node.js and Express service. |
| TC4 | Docker | All microservices must be containerized for consistent deployment. |

2.2. Organizational Constraints

| ID | Constraint | Background/Motivation |
|---|---|---|
| OC1 | Team Composition | The project is developed by a team of five members within the academic timeframe. |
| OC2 | Deadlines | All project deliverables must be submitted according to the academic calendar. |

2.3. Conventions

| ID | Constraint | Background/Motivation |
|---|---|---|
| C1 | Documentation Template: arc42 | The documentation follows the arc42 structure to keep the architecture description consistent and standardized. |
| C2 | Documentation Language: English | English is used throughout the documentation as the shared project language. |
| C3 | Development Workflow: Pull Requests | All code changes must be reviewed through pull requests to support quality and team synchronization. |
| C4 | Collective Code Ownership | All team members are responsible for the entire codebase, regardless of who authored a specific part. |
| C5 | Meeting Documentation | All meetings, whether regular or extraordinary, must be documented in the repository wiki to preserve decisions. |

3. Context and Scope

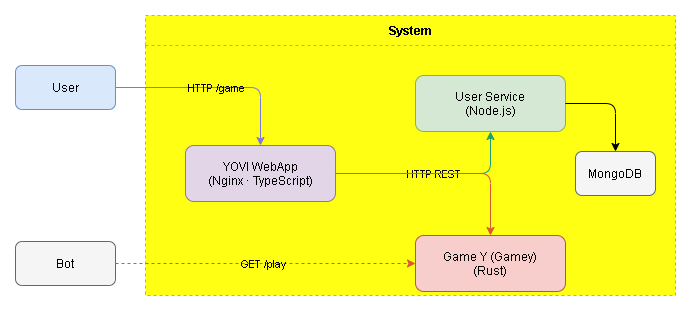

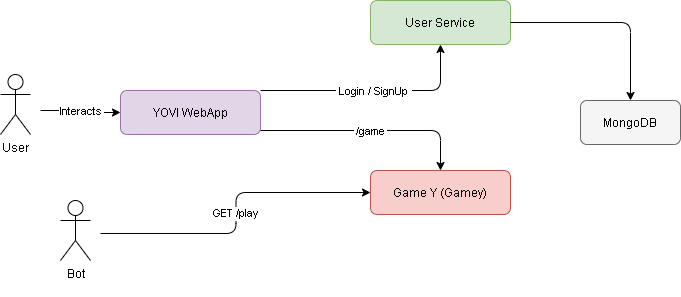

3.1. Business Context

- YOVI is a web platform that allows human users and bots to play the Y game.
- Human users interact with the system through the YOVI WebApp in a browser, using routes such as `/login`, `/register`, `/dashboard`, `/game`, and `/ranking`.
- External bots interact with the game engine through the public `GET /play` endpoint exposed by GameY.
- The YOVI WebApp communicates with the User Service for authentication, token validation, and user statistics, and with GameY to request the next move during a game.
- GameY is implemented in Rust and is responsible for validating game states, checking win conditions, and selecting the next move using YEN notation.
- MongoDB is used by the User Service to store persistent user data.

3.2. Technical Context

- YOVI Web Application: the main user interface of the system. It is implemented as a React application with TypeScript and communicates with the backend services over HTTP.
- User Service: internal service responsible for registration, login, token validation, and player statistics. It exposes a REST API and persists data in MongoDB.
- GameY: a Rust service that implements the game logic for Y. It validates game states, checks for a winner, and suggests the next move. It exposes HTTP endpoints that exchange JSON data encoded with YEN notation.
- MongoDB: database used by the User Service to store persistent user information.

4. Solution Strategy

The solution strategy for this project is based on three main pillars: a small service-oriented architecture, containerized deployment, and automated quality assurance. These decisions keep the system simple to deploy, easy to evolve, and consistent across development and test environments.

4.1. Key Technology Decisions

The selected technology stack balances performance, developer productivity, and code reliability.

| Technology | Rationale |
|---|---|
| Rust & Cargo | Power the GameY engine. Rust provides performance and memory safety for the game logic and bot selection API. |
| Node.js & Express | Power the User Service. The non-blocking I/O model of Node.js is well suited to handling multiple concurrent requests for user management and scores. |
| Vite & React | Power the web application. Vite provides a fast development experience and React structures the UI as reusable components. |
| MongoDB Atlas | A cloud-based NoSQL database that offers high flexibility for our data models and eliminates the overhead of local database administration. |
| Docker & Docker Compose | Package and orchestrate the services so they can be started with a single command. |
| GitHub Actions | Automates build and test workflows for the repository. |
| SonarCloud | Provides static analysis and code-quality tracking, including coverage reporting. |
| Prometheus & Grafana | Provide metrics collection and visualization for the deployed services. |

4.2. Architectural Decisions

Several strategic decisions were made to streamline the architecture and reduce unnecessary complexity:

- Point-to-Point Communication (No API Gateway): We explicitly decided not to implement an API Gateway. For the current scope of the project, a gateway would introduce additional latency and an unnecessary single point of failure. Instead, microservices communicate directly through a dedicated Docker network (`monitor-net`).
- Container-First Strategy: Every component of the system is containerized. This allows us to package dependencies (like the Rust environment or Node runtimes) within the image, avoiding environment-related inconsistencies. We use GHCR (GitHub Container Registry) to host our images.
- Internal Networking: We utilize a bridge network in Docker (`monitor-net`) to isolate service traffic, ensuring that inter-service communication remains structured and controlled.

4.3. Quality and Development Strategy

To maintain high software quality and operational excellence, the team follows a structured development workflow:

- Automated Quality Gates: We integrate SonarCloud within our CI/CD pipeline. This ensures that every contribution is analyzed for code coverage and quality standards. We aim for high test coverage to minimize regressions and ensure the reliability of the game logic and user management.
- GitHub-Flow Methodology:
  - Issues & Task Tracking: All features and bugs are documented as GitHub Issues to maintain a clear roadmap.
  - Pull Requests (PRs): No code is merged directly into the main branch. We use PRs to facilitate peer reviews, ensuring that at least one other team member validates the logic and style of the changes.
- Team Coordination and Communication:
  - Synchronous Coordination: The team holds weekly meetings to align on objectives, discuss architectural challenges, and track progress against deadlines.
  - Asynchronous Communication: We use instant messaging platforms (WhatsApp) for day-to-day, rapid coordination and GitHub’s discussion tools for technical documentation and decision-making.

4.4. Constraints and Consistency

The strategy remains compliant with the project constraints:

- Adherence to the provided skeleton while enhancing it with professional-grade monitoring and quality tools.
- Strict use of English for all technical documentation and code comments.
- Ensuring that the final solution is easily deployable via a single `docker-compose up` command.

5. Building Block View

5.1. Whitebox Overall System

5.1.1. Overview diagram

This diagram shows how the user interacts with the system.

- Motivation: The Yovi system is a web application that lets users play the game of Y. The project architecture follows a clear separation between presentation, application logic, game logic, persistence, and observability. This separation improves maintainability, scalability, and testability.
- Contained Building Blocks:

| Name | Responsibility |
|---|---|
| Web Frontend | Implemented with React and TypeScript. It provides the user interface for login, registration, the dashboard, the game view, and the ranking page. |
| Users Service | Implemented with Node.js and Express. It manages user registration, login, token validation, and player statistics, and persists data in MongoDB. |
| Game Engine | Implemented in Rust. It contains the core logic of the game of Y, including move validation, game state management, win-condition detection, and bot move selection. |
| MongoDB | Persistence layer used to store user accounts and player statistics. |
| Monitoring Stack | Prometheus and Grafana provide metrics collection and visualization for the deployed services. |

5.2. Level 2 - Container View

This level illustrates the structural decomposition of the system into the main runtime services and support containers used in the project.

5.2.1. White Box

- Motivation: The system uses a modular architecture to separate the user interface, the user service, the game engine, and the monitoring infrastructure.
- Contained Building Blocks:

| Name | Responsibility |
|---|---|
| WebApp | Delivers the graphical user interface and communicates with the backend services to handle user actions and requests. Located in the `/webapp` directory. |
| Users Service | Manages user registration, login, token validation, and player statistics. Located in the `/users` directory. |
| Game Engine | Implements the core mechanics of the game of Y, handling move validation and bot move selection. Located in the `/gamey` directory. |
| MongoDB | Database used to store user data and player statistics. |
| Prometheus | Collects metrics from the running services. |
| Grafana | Visualizes service metrics and dashboards. |

6. Runtime View

The runtime view describes the dynamic behavior and interactions of the YOVI system’s building blocks. Interaction with YOVI is centered on game turns, external API access, Rust-based game logic, and data persistence.

Two primary use cases are architecturally significant because they show the coordination between the TypeScript web application, the users service, and the Rust game engine: human user interaction and automated bot interaction.

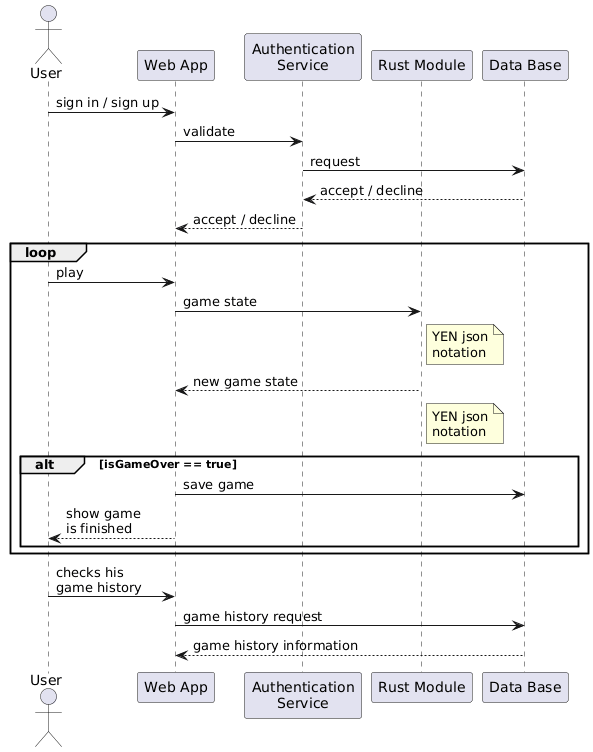

6.1. Human Player Game (Web UI)

This scenario shows how a registered user interacts with the application to play a match. The sequence includes authentication, game setup, game logic processing, and persistent storage of results.

In practice, the system supports three core user interactions: account registration and authentication, playing matches against an AI opponent, and reviewing personal game history and statistics.

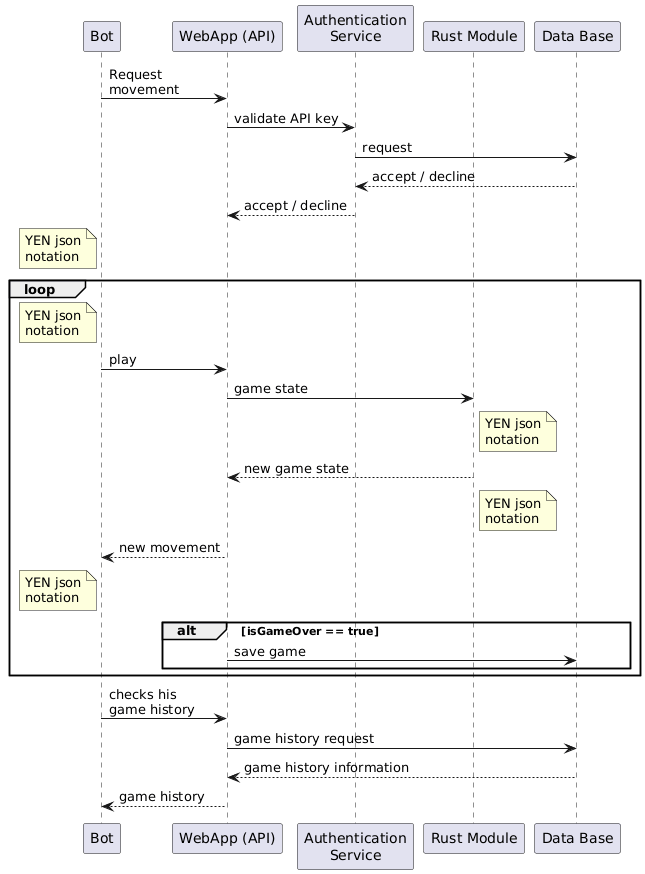

6.2. Bot Interaction (External API)

The second use case focuses on how an external bot interacts with the system through the public /play endpoint, which is a core requirement for interoperability.

- API Request: The bot sends a GET request to the `/play` endpoint with the current board position encoded in YEN notation.
- Request Parsing: The GameY service parses the request parameters and validates the provided board state.
- Move Computation: The Rust game engine forwards the request to the selected bot strategy and computes the next move.
- Game Update: The updated board state is applied to the internal game model and converted back to YEN.
- API Response: The GameY service returns the resulting board state to the bot in YEN format.
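
The five steps above can be sketched as a single function. GameY itself is written in Rust, so this TypeScript version only illustrates the flow. `BoardPosition` is a simplified stand-in for a YEN position (the real YEN schema is not reproduced here), and `handlePlay`, `firstEmptyBot`, and the bot identifier `first_empty` are hypothetical names.

```typescript
// Simplified stand-in for a YEN position (assumption, not the real schema).
interface BoardPosition {
  size: number;     // number of rows in the triangular board
  turn: "B" | "R";  // player to move
  rows: string[];   // row r holds r + 1 characters: "B", "R", or "." (empty)
}

interface Move { row: number; col: number; }

type BotStrategy = (position: BoardPosition) => Move | null;

// Hypothetical strategy: pick the first empty cell, scanning top to bottom.
const firstEmptyBot: BotStrategy = (position) => {
  for (let row = 0; row < position.size; row++) {
    const col = position.rows[row].indexOf(".");
    if (col !== -1) return { row, col };
  }
  return null;
};

// Registry used to look up the strategy selected by the caller.
const strategies: Record<string, BotStrategy | undefined> = {
  first_empty: firstEmptyBot,
};

// Rejects malformed positions before any move is computed.
function validate(position: BoardPosition): void {
  if (position.rows.length !== position.size) {
    throw new Error("row count does not match board size");
  }
  position.rows.forEach((row, r) => {
    if (row.length !== r + 1 || !/^[BR.]*$/.test(row)) {
      throw new Error(`invalid row ${r}`);
    }
  });
}

function handlePlay(rawPosition: string, botId: string): string {
  const position = JSON.parse(rawPosition) as BoardPosition; // request parsing
  validate(position);                                        // state validation
  const strategy = strategies[botId];
  if (strategy === undefined) throw new Error(`unknown bot: ${botId}`);
  const move = strategy(position);                           // move computation
  if (move === null) throw new Error("no legal move available");
  const rows = position.rows.map((row, r) =>                 // game update
    r === move.row
      ? row.slice(0, move.col) + position.turn + row.slice(move.col + 1)
      : row
  );
  const next: BoardPosition = {
    size: position.size,
    turn: position.turn === "B" ? "R" : "B",
    rows,
  };
  return JSON.stringify(next);                               // API response
}
```

Because the function receives the whole position and returns the whole position, it needs no session state between calls.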

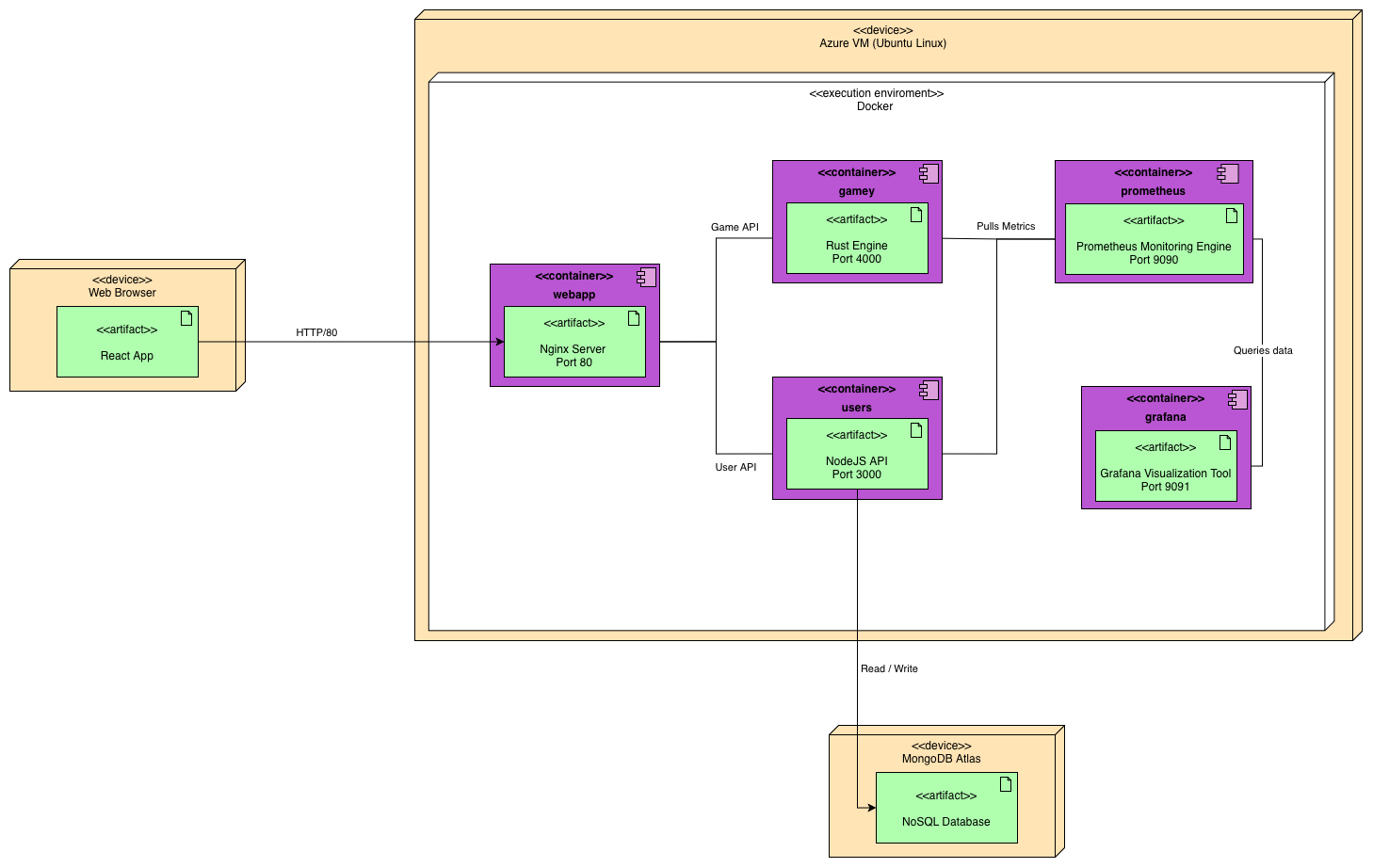

7. Deployment View

7.1. Infrastructure Level 1: Production Environment

- Motivation: The system is deployed using a hybrid cloud strategy. The application logic is hosted on a Virtual Machine in Microsoft Azure running Ubuntu Linux, providing a scalable and reliable environment for Docker containerization. Persistence is decoupled via MongoDB Atlas to ensure data durability. This setup allows us to manage the technological heterogeneity (Node.js and Rust) while maintaining high availability.
- Quality and/or Performance Features:
  - Scalability: Azure allows for vertical scaling of the VM if the Rust engine or monitoring stack requires more resources.
  - Reliability: MongoDB Atlas provides a managed, replicated cluster that guarantees data persistence regardless of the VM state.
  - Portability: Using Docker on Ubuntu ensures that the entire stack can be replicated in any other cloud provider with minimal changes.
- Mapping of Building Blocks to Infrastructure:

| Building Block | Infrastructure Element | Description |
|---|---|---|
| WebApp | Azure VM (Docker Container) | Nginx server delivering the React bundle. |
| Users Service | Azure VM (Docker Container) | Node.js API for user management and business logic. |
| GameY Engine | Azure VM (Docker Container) | High-performance Rust engine for game logic. |
| Monitoring | Azure VM (Docker Container) | Prometheus and Grafana for system observability. |
| Database | MongoDB Atlas (Cloud) | Managed NoSQL storage for persistent data. |

7.2. Infrastructure Level 2: Application Host & Docker Network

7.2.1. Application Host (Ubuntu Linux on Azure)

Inside the application host, all services are orchestrated via Docker Compose within a shared bridge network called monitor-net. This allows internal communication and metrics scraping using container names as DNS.

Execution Environments & Artifacts:

- Web Server Container: An execution environment hosting the `WebApp` static files (HTML/JS/CSS) served by Nginx.
- Users Service Container: An execution environment running the `Node.js` runtime for the Users API.
- GameY Engine Container: A high-performance environment running the compiled `Rust` binary.
- Monitoring Engine (Prometheus): A containerized artifact that scrapes metrics from the other services via `monitor-net`.
- Visualization Tool (Grafana): A containerized artifact that queries Prometheus to display real-time dashboards, exposed on port `9091`.

7.2.2. Client Side (User Environment)

The user’s browser acts as the primary execution environment for the React application.

1. Bootstrap: The browser requests the static bundle from the Azure VM (port 80).

2. Execution: Once downloaded, the logic runs on the client’s device.

3. Communication: The client makes asynchronous REST calls to the Users Service (port 3000) and GameY (port 4000).
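
As an illustration of the communication step, the client could derive request URLs from the two service ports listed above. This is only a sketch: the helper `serviceUrl`, the host `yovi.example`, and the query parameter name `position` are assumptions and are not taken from the project code.

```typescript
// Ports as stated in the deployment description. The host is a placeholder.
const SERVICE_PORTS = { users: 3000, gamey: 4000 } as const;
type ServiceName = keyof typeof SERVICE_PORTS;

// Builds an absolute URL for one of the two backend services, encoding any
// query parameters so that JSON payloads survive the trip in a GET request.
function serviceUrl(
  host: string,
  service: ServiceName,
  path: string,
  query: Record<string, string> = {}
): string {
  const pairs = Object.keys(query).map(
    (key) => `${encodeURIComponent(key)}=${encodeURIComponent(query[key])}`
  );
  const suffix = pairs.length > 0 ? `?${pairs.join("&")}` : "";
  return `http://${host}:${SERVICE_PORTS[service]}${path}${suffix}`;
}

console.log(serviceUrl("yovi.example", "users", "/login"));
// http://yovi.example:3000/login
console.log(serviceUrl("yovi.example", "gamey", "/play", { position: '{"size":1}' }));
// http://yovi.example:4000/play?position=%7B%22size%22%3A1%7D
```

Centralizing the ports in one constant would keep the client in step with the deployment if either port changes.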

7.2.3. Persistence (MongoDB Atlas)

The Users Service connects to the MongoDB Atlas cluster via the MongoDB Wire Protocol. Access is secured through IP whitelisting (restricted to the Azure VM’s public IP) and TLS encryption, ensuring that sensitive user data is handled securely outside the main application host.

8. Cross-cutting Concepts

To better understand how the application works as a whole, it is useful to highlight a set of transversal concepts that influence different parts of the system. These ideas can be grouped into the following categories:

- Domain Concepts
- User Experience (UX)
- Safety and security concepts
- Architecture and design patterns
- Development concepts

The following sections describe each of these categories in detail.

8.1. Domain Concepts

The YOVI platform domain is centered on representing and processing matches of the game of Y. To support both human players and automated agents, the system defines core concepts that structure how matches are created, played, validated, and interpreted.

- User: Represents a human player interacting with the web application. A user can register, log in, play matches, and view personal statistics and ranking information.
- Game State: A game models an active or completed match of Y. It includes board size, turn, players, and board layout. The board state is represented in YEN and processed by the Rust engine for validation and move computation.
- YEN Notation: The platform uses YEN notation to encode game states in a JSON-like format. This provides a shared contract between frontend integrations and the Rust game logic.
- Bot Interaction: External bots interact with the public `/play` endpoint exposed by GameY. They submit a board position and receive an updated position containing the selected move.
- Rule Evaluation and Move Suggestion (Rust Module): The Rust subsystem encapsulates the logic for determining whether a game has been won and for suggesting the next optimal move.

Together, these domain concepts define the core structure of the YOVI ecosystem.
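
The win rule behind the Rule Evaluation concept (one player connects all three sides of the triangle) can be checked with a flood fill over connected groups of stones. The sketch below is an assumption-laden illustration in TypeScript, not the Rust engine's code: it assumes a board stored as one string per row, where row r holds r + 1 cells containing "B", "R", or ".", and it assumes corner cells count as belonging to both adjacent sides.

```typescript
// Offsets of the six neighbours of (row, col) in a triangular layout where
// row r holds r + 1 cells.
const NEIGHBOURS: ReadonlyArray<[number, number]> = [
  [0, -1], [0, 1], [-1, -1], [-1, 0], [1, 0], [1, 1],
];

// Returns true if some connected group of the player's stones touches the
// left side (col 0), the right side (col === row), and the bottom side.
// Assumes the rows are well formed (row r has exactly r + 1 characters).
function hasWon(rows: string[], player: "B" | "R"): boolean {
  const size = rows.length;
  const visited: boolean[][] = rows.map((row) => row.split("").map(() => false));

  for (let startRow = 0; startRow < size; startRow++) {
    for (let startCol = 0; startCol <= startRow; startCol++) {
      if (visited[startRow][startCol] || rows[startRow][startCol] !== player) continue;

      // Flood-fill one connected group and record which sides it touches.
      let left = false;
      let right = false;
      let bottom = false;
      const stack: Array<[number, number]> = [[startRow, startCol]];
      visited[startRow][startCol] = true;
      while (stack.length > 0) {
        const [row, col] = stack.pop() as [number, number];
        if (col === 0) left = true;
        if (col === row) right = true;
        if (row === size - 1) bottom = true;
        for (const [dRow, dCol] of NEIGHBOURS) {
          const r = row + dRow;
          const c = col + dCol;
          if (r < 0 || r >= size || c < 0 || c > r) continue;
          if (visited[r][c] || rows[r][c] !== player) continue;
          visited[r][c] = true;
          stack.push([r, c]);
        }
      }
      if (left && right && bottom) return true;
    }
  }
  return false;
}

const board = ["B", "B.", "BRR"];
console.log(hasWon(board, "B")); // true: the left edge chain reaches all three sides
console.log(hasWon(board, "R")); // false: the R group never touches the left side
```

Each cell is visited at most once per call, so the check is linear in the number of cells on the board.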

8.2. User Experience (UX)

The user experience of the YOVI web application is focused on a simple gameplay flow:

- Public access to registration and login routes.
- Clear navigation to dashboard, game, and ranking views.
- Immediate visual feedback during match setup and gameplay.
- Access to user performance indicators through statistics and ranking pages.

8.3. Safety and security concepts

Several security mechanisms are already implemented to keep the platform controlled and reliable.

- JWT-based Authentication and Authorization: The users service issues JWTs on successful login and validates them in protected endpoints using middleware.
- Credential Protection: User passwords are hashed with bcrypt before being stored.
- Input Validation and Sanitization: User-related inputs are sanitized in the users service, and invalid requests are rejected with explicit HTTP status codes.
- Controlled Error Responses: Service responses return concise error messages and avoid exposing internal implementation details.

These measures lay the foundation for a secure environment in which both human players and automated agents can safely interact with the YOVI platform.
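
The token check performed by the middleware could look roughly like the sketch below. It assumes the token travels in an `Authorization: Bearer <token>` header, which the text above does not state, and it takes the actual JWT verification as a parameter (`verify`) rather than calling a specific library. All names here are hypothetical.

```typescript
// Extracts the token from an "Authorization: Bearer <token>" header value.
// Returns null when the header is missing or uses another scheme.
function extractBearerToken(header: string | undefined): string | null {
  if (header === undefined) return null;
  const match = /^Bearer\s+(\S+)$/i.exec(header.trim());
  return match ? match[1] : null;
}

// Stand-in for the real JWT verification step (signature and expiry checks).
type VerifyToken = (token: string) => { username: string } | null;

interface AuthResult {
  status: 200 | 401;
  username?: string;
}

// Decides whether a request to a protected endpoint may proceed. The 401
// result carries no detail, in line with the controlled error responses.
function authenticate(header: string | undefined, verify: VerifyToken): AuthResult {
  const token = extractBearerToken(header);
  if (token === null) return { status: 401 };
  const claims = verify(token);
  return claims === null ? { status: 401 } : { status: 200, username: claims.username };
}

// Fake verifier used only for this example.
const fakeVerify: VerifyToken = (token) =>
  token === "valid-token" ? { username: "alice" } : null;

console.log(authenticate("Bearer valid-token", fakeVerify)); // { status: 200, username: 'alice' }
console.log(authenticate("Basic abc", fakeVerify));          // { status: 401 }
```

Passing the verifier in as a function keeps the guard testable without real signing keys.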

8.4. Architecture and Design Patterns

The YOVI platform architecture follows modular service separation across frontend, user management, and game logic.

- Architecture
  - The web application, implemented in TypeScript with React, handles user interaction and client-side orchestration.
  - The users service, implemented in Node.js and Express, handles authentication and player statistics persistence.
  - The Rust GameY service acts as a specialized component for rule validation and move computation.
  - This separation allows each subsystem to evolve independently, maintaining a clear contract through JSON-based communication.
- API-Based Design: Human users and bots interact through explicit HTTP endpoints (`/login`, `/createuser`, stats endpoints, and `/play`).
- Communication via JSON (YEN notation): Game-state exchange uses YEN notation to provide a standardized, technology-independent representation.

These architectural principles provide a solid foundation for the YOVI platform, keeping the system flexible, scalable, and easy to evolve as new game variants or features are introduced.

8.5. Development Concepts

The development process of the YOVI platform follows a set of practices intended to ensure code quality and smooth collaboration among team members.

- Testing Strategy: The project includes automated tests at different levels: unit and service tests in Node.js, component and E2E tests in the web application, and Rust tests for the game engine.
- Continuous Integration and Delivery: GitHub Actions workflows run builds, tests, coverage analysis, and SonarCloud quality checks. On release, container images are published and deployment is automated via SSH and Docker Compose.

9. Architecture Decisions

The architectural decisions documented in this section complement the solution strategy described in Section 4. While the solution strategy outlines the main technologies and constraints inherited from the project skeleton, this section focuses on the reasoning behind the most relevant structural and organizational choices made during the early stages of the project.

Since the system is still in an early development phase, the decisions captured here may evolve as the project grows. Their purpose is to provide clarity, traceability, and a shared understanding of the architectural direction.

9.1. Use of technologies provided with the project skeleton

9.1.1. Context

The project is based on a predefined skeleton that includes Rust for the game engine, Node.js/Express for the user service, and Vite/React for the web application.

9.1.2. Decision

Adopt the technologies already provided by the skeleton as the baseline for all components.

9.1.3. Rationale

- Ensures consistency with the course requirements.
- Reduces setup time and avoids unnecessary re-evaluation of the technology stack.
- Allows the team to focus on architectural design rather than tool selection.

9.1.4. Consequences

- Limits the freedom to choose alternative frameworks or languages.
- Requires the team to adapt to the constraints imposed by the skeleton.

9.2. Adoption of MongoDB as the project’s database

9.2.1. Context

The project requires persistent storage for user login information and game scores.

9.2.2. Decision

Use MongoDB as the primary database for the system.

9.2.3. Rationale

- Easy to use and integrate with Node.js.
- Widely used among peers, facilitating knowledge sharing.
- Flexible schema suitable for early-stage academic projects.

9.2.4. Consequences

- Requires maintaining a separate database service in the deployment environment.
- May require stronger schema-validation mechanisms if the project grows in complexity.

9.3. Service-oriented separation of responsibilities

9.3.1. Context

The system consists of three components with distinct roles: frontend, user service, and game engine.

9.3.2. Decision

Maintain a clear separation of concerns by treating each component as an independent service with its own responsibilities and lifecycle.

9.3.3. Rationale

- Improves modularity and maintainability.
- Allows each component to evolve independently.
- Aligns with the educational goal of exposing students to distributed architectures.

9.3.4. Consequences

- Introduces the need for well-defined communication interfaces.
- Increases operational complexity compared to a monolithic approach.

10. Quality Requirements

10.1. Quality Tree

10.2. Quality Scenarios

| Scenario ID | Scenario Name | Source | Stimulus | Environment | Artifact | Response | Priority |
|---|---|---|---|---|---|---|---|
| SC-01 | Performance | User | Opens or refreshes the page | Normal operation | WebApp, Users Service, GameY | The system responds quickly, rendering the requested view and loading required data without noticeable delay for normal usage. | High |
| SC-02 | Security | User, cheater | Tries to hack the game to claim a victory without playing | Normal operation | Backend | The server checks the client’s data and denies the request. | High |
| SC-03 | Usability | New user | Uses the application for the first time | Normal operation | WebApp | The interface provides clear navigation, readable labels, and immediate visual feedback so users can register, start a game, and understand results without external help. | Medium |
| SC-04 | Maintainability | Developer | A bug fix or feature change is requested | Maintenance phase | WebApp, Users Service, GameY | The modular structure and test suite allow developers to isolate the affected component, implement the change, and validate it with limited impact on other services. | High |

11. Risks and Technical Debt

This section describes the technical risks and the intentional (or unintentional) technical debt identified during the development of the YOVI system.

11.1. Risks

| Risk | Description | Priority |
|---|---|---|
| Rust/TS Integration | Potential communication failures or performance bottlenecks in the YEN notation exchange between the TypeScript Backend and the Rust Module. | High |
| Security and API Protection | Risks of unauthorized access to the game history or the bot-exclusive API if API keys and authentication mechanisms are not properly secured. | High |
| Performance and Scalability | The system’s ability to handle multiple simultaneous games could be compromised if the Rust calculation engine is not optimized or if the API bridge blocks threads. | High |
| Infrastructure Dependency (Azure) | Total reliance on Microsoft Azure for the production environment. Any service outage directly impacts the availability of the game for both users and bots. | Medium |
| Lack of prior collaboration within the team | Since the team has not worked together before, there is a risk of inefficiencies in coordination. Establishing clear communication channels and regularly updating each other on progress will mitigate this issue. | Medium |
| Lack of Integration Testing | Insufficient testing of the full cycle (Frontend → Backend → Rust → DB) could lead to undetected bugs in the game state transitions. | Medium |

11.2. Technical Debt

| Technical Debt | Description |
|---|---|
| Learning Curve: Rust Expertise | The development team has limited prior experience with Rust. This may result in suboptimal memory management or non-idiomatic code that will require future refactoring. |
| Incomplete Documentation | As the project is still in an active development phase, some sections of the arc42 documentation and code comments are not yet fully completed or updated. |
| Code Refactoring | Early architectural decisions focused on achieving a functional prototype (MVP) might need to be refactored to improve maintainability and legibility. |

12. Glossary

12.1. Architectural Concepts

| Term | Definition |
|---|---|
| API (Application Programming Interface) | A contract that defines how different software components communicate with each other. In YOVI, APIs enable interaction between the Web Application, Users Service, Game Engine, and external bots. |
| Microservices | Small, independent services responsible for specific business capabilities. In YOVI, the system is decomposed into Web Application, Users Service, and Game Engine, each deployed as a separate Docker container. |
| Service-Oriented Architecture (SOA) | An architectural style that organizes functionality as a collection of loosely coupled, autonomous services. YOVI follows this pattern to maintain clear separation between presentation, user management, and game logic. |
| Separation of Concerns | A design principle that divides the system into components, each responsible for a specific aspect of functionality. YOVI separates frontend, backend, database, and game logic to improve maintainability and testability. |
| BFF (Backend for Frontend) | A backend service designed specifically to serve the needs of a particular frontend application. The coordination between the Users Service and the React Web Application follows this pattern. |

12.2. Design and Integration Patterns

| Term | Definition |
|---|---|
| Stateless Computation | Processing that does not retain state between requests. The Game Engine (Rust module) follows this pattern: it receives a game state in YEN notation and returns a move suggestion without maintaining session data. |
| Orchestration | Coordination of multiple services to achieve a common goal. YOVI uses Docker Compose to orchestrate Web Application, Users Service, Game Engine, and Monitoring services within the Azure VM. |
| Request/Response Pattern | A communication model where a client sends a request and waits for a response. YOVI uses this pattern for all interactions between the Web Application and backend services via JSON over HTTP. |
| CI/CD (Continuous Integration/Continuous Deployment) | An automated process where code changes are continuously tested and deployed to production. YOVI implements CI/CD workflows to ensure code quality and rapid feedback to the development team. |

12.3. Quality and Non-Functional Attributes

| Term | Definition |
|---|---|
| Quality Goals | Non-functional requirements that define desired system attributes. YOVI prioritizes Performance (low latency), Testability (high test coverage), Usability (intuitive interface), and Maintainability (clean, modular code). |
| Quality Scenarios | Concrete descriptions of how the system responds to stimuli with respect to quality attributes. Example: "When a user opens the page, the system must display the previously saved state in under 2 seconds" (SC-01: Performance). |
| Scalability | The system’s ability to handle increased load by adding resources or optimizing algorithms. YOVI’s architecture allows vertical scaling on Azure and modular design enables independent scaling of each service. |
| Testability | The ease with which a system can be tested at multiple levels. YOVI achieves testability through clear separation of concerns, enabling unit, integration, and end-to-end tests of each module independently. |
| Maintainability | The ease with which the system can be modified, debugged, and enhanced. YOVI’s modular architecture, clear API contracts, and comprehensive documentation support long-term maintainability. |

12.4. Security and Operations

| Term | Definition |
|---|---|
| Authentication | The process of verifying that a user is who they claim to be. YOVI requires users to authenticate before accessing game features and maintains secure credential storage for registered users. |
| Authorization | The process of determining whether an authenticated user has permission to access specific resources or perform specific actions. YOVI protects game features and the bot API through authorization checks. |
| API Key | A unique identifier that grants access to protected APIs. YOVI uses API keys to control access to the |
| Deployment View | A description of how software components are distributed across physical infrastructure. YOVI is deployed on Microsoft Azure using Docker containers, with MongoDB Atlas for persistence and Grafana for monitoring. |
| Observability | The ability to understand system behavior and diagnose issues through logs, metrics, and traces. YOVI uses Prometheus for metrics collection and Grafana for visualization in production. |
| Technical Debt | Work that reduces code quality or increases future maintenance costs, often incurred to meet short-term deadlines. YOVI acknowledges technical debt in Rust expertise and incomplete documentation that may require future refactoring. |

12.5. Domain and Technical Terms

| Term | Definition |
|---|---|
| YEN Notation | A standardized JSON-based format for encoding game states and moves in YOVI. Both the Web Application and Rust Game Engine use YEN notation to ensure consistent interpretation of board positions and valid moves across system boundaries. |
| Game State | The complete representation of a game at any point in time, including the board configuration, current player, move history, and game status (in-progress, won, drawn). Game states are exchanged in YEN notation. |
| Move Validation | The process of verifying that a proposed move is legal according to the game rules. The Rust Game Engine is responsible for move validation to ensure only valid game transitions occur. |
| Win Condition Detection | The logic that determines when a player has achieved victory. In the Y game, this occurs when a player connects all three sides of the triangle. The Rust module encapsulates this critical logic. |
| Container | A lightweight, standalone execution environment that packages an application and its dependencies. YOVI uses Docker containers to deploy the Web Application, Users Service, Game Engine, and Monitoring Stack. |
| Architecture Decision Records (ADR) | A structured document capturing important architectural decisions, including context, decision rationale, and consequences. YOVI uses ADRs to maintain transparency and traceability of design choices. |
| Cross-Cutting Concepts | Concerns that span multiple components or layers of the architecture, rather than being isolated to a single module. In YOVI, examples include security, testing strategy, and the communication protocol (YEN notation). |
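To make the Win Condition Detection entry more concrete, the following is a minimal sketch of how a win check for Y could look. It is not taken from the YOVI Rust module: the `Board` and `Player` types, the row-by-row triangular layout (row `r` holds `r + 1` cells) and the flood-fill approach are all assumptions made for illustration.

```rust
/// Cell owner. Names are illustrative, not taken from the YOVI code base.
#[derive(Clone, Copy, PartialEq, Eq, Debug)]
pub enum Player {
    A,
    B,
}

/// Assumed layout: a triangle where row `r` holds `r + 1` cells (`c <= r`).
pub struct Board {
    pub cells: Vec<Vec<Option<Player>>>,
}

impl Board {
    pub fn new(size: usize) -> Self {
        Board { cells: (0..size).map(|r| vec![None; r + 1]).collect() }
    }

    /// The (up to six) neighbours of cell (r, c) that lie inside the triangle.
    fn neighbours(&self, r: usize, c: usize) -> Vec<(usize, usize)> {
        let (r, c) = (r as isize, c as isize);
        let n = self.cells.len() as isize;
        [(0, -1), (0, 1), (-1, -1), (-1, 0), (1, 0), (1, 1)]
            .iter()
            .map(|&(dr, dc)| (r + dr, c + dc))
            .filter(|&(nr, nc)| nr >= 0 && nr < n && nc >= 0 && nc <= nr)
            .map(|(nr, nc)| (nr as usize, nc as usize))
            .collect()
    }

    /// True if `player` owns one connected group that touches all three sides.
    pub fn has_won(&self, player: Player) -> bool {
        let n = self.cells.len();
        let mut seen: Vec<Vec<bool>> =
            self.cells.iter().map(|row| vec![false; row.len()]).collect();
        for r in 0..n {
            for c in 0..=r {
                if seen[r][c] || self.cells[r][c] != Some(player) {
                    continue;
                }
                // Flood-fill one group and record which sides it touches.
                let (mut left, mut right, mut bottom) = (false, false, false);
                let mut stack = vec![(r, c)];
                seen[r][c] = true;
                while let Some((cr, cc)) = stack.pop() {
                    left |= cc == 0;
                    right |= cc == cr;
                    bottom |= cr == n - 1;
                    for (nr, nc) in self.neighbours(cr, cc) {
                        if !seen[nr][nc] && self.cells[nr][nc] == Some(player) {
                            seen[nr][nc] = true;
                            stack.push((nr, nc));
                        }
                    }
                }
                if left && right && bottom {
                    return true;
                }
            }
        }
        false
    }
}
```

In this sketch a corner cell counts as touching two sides, so on a board of size 3 a player who owns the whole bottom row has already connected all three sides.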

13. Load Tests

13.1. The Cases

Our load tests were focused on the playable aspect of the application, mainly to test the capabilities of the bots the users will play against.

We devised three main scenarios:

-

The user plays against the random bot on a small gameboard.

-

The user plays against the Heuristic bot on a default-sized gameboard.

-

The user plays against the Montecarlo bot on a maximum-sized gameboard.

These three scenarios were recorded with the Gatling tool, which generated a Scala simulation file for each one, and were then replayed under the same load.

In addition, all three scenarios were first run against a local Docker deployment and then against a remote deployment on Microsoft Azure, to compare the results in a local environment with those in a more demanding and realistic context.

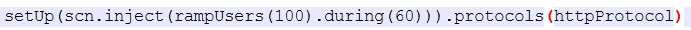

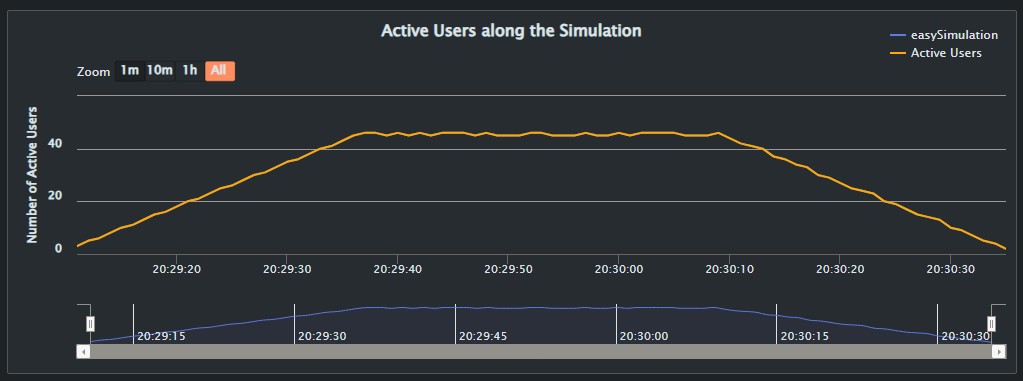

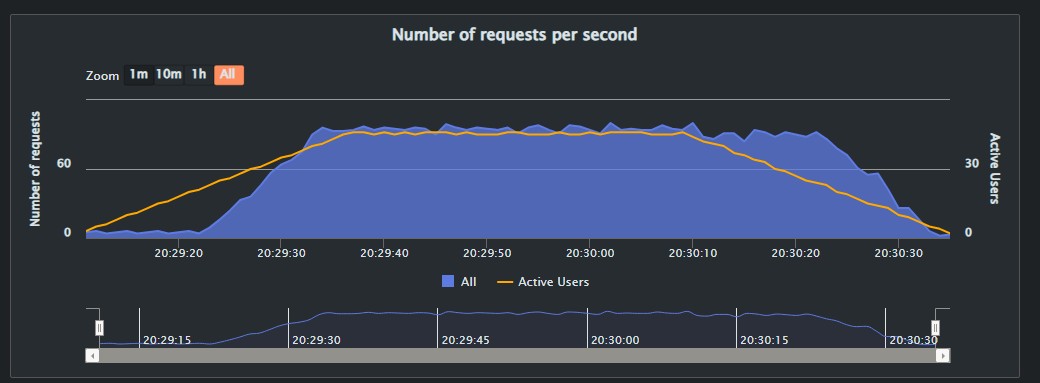

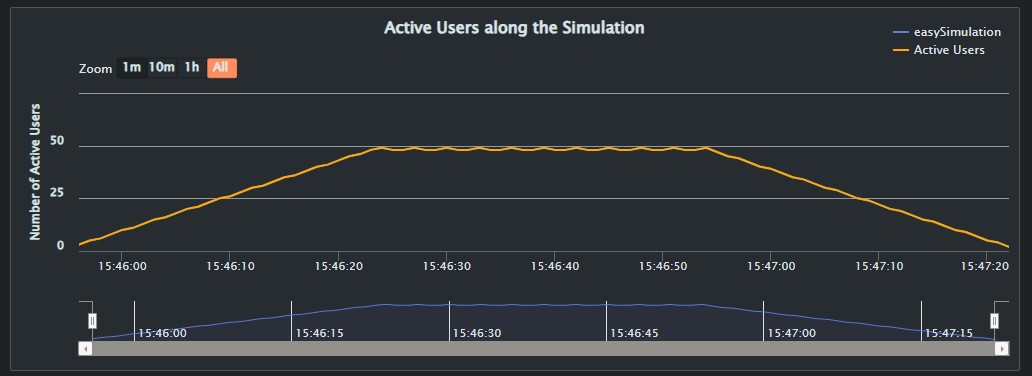

For consistency between the tests, the load was configured to ramp up to 100 users over 60 seconds.
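As a rough illustration of what this load profile means in practice, the sketch below computes when each virtual user would start, assuming the ramp is linear (users injected at evenly spaced intervals). The function names are hypothetical and the code only reproduces the arithmetic; it is not part of the Gatling simulations.

```rust
use std::time::Duration;

/// Start offset of each virtual user, assuming a linear ramp:
/// user `i` starts at `i * ramp / users`.
pub fn ramp_start_offsets(users: u32, ramp: Duration) -> Vec<Duration> {
    (0..users).map(|i| ramp * i / users).collect()
}

/// Average number of new users per second during the ramp.
pub fn arrival_rate(users: u32, ramp: Duration) -> f64 {
    users as f64 / ramp.as_secs_f64()
}
```

Under that assumption, 100 users over 60 seconds means one new user every 600 ms, or about 1.67 new users per second.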

13.2. Docker Tests

13.2.1. Context

This first batch of tests was carried out against a locally deployed application using Docker. The results are shown and explained below.

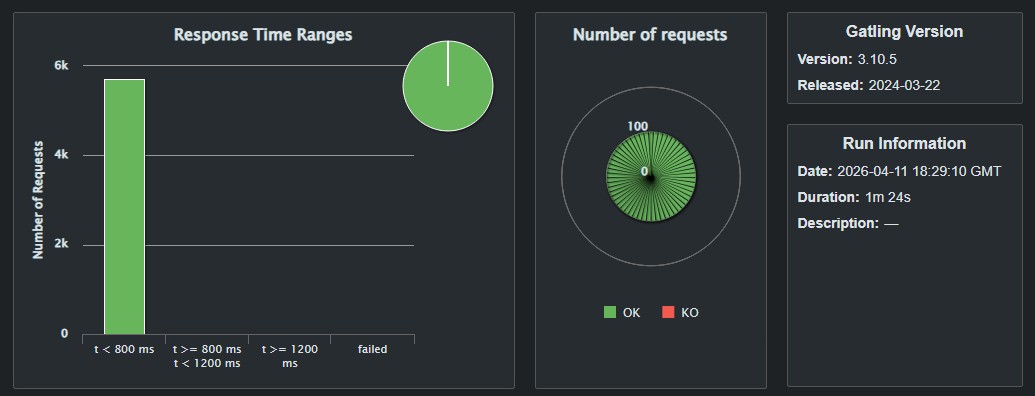

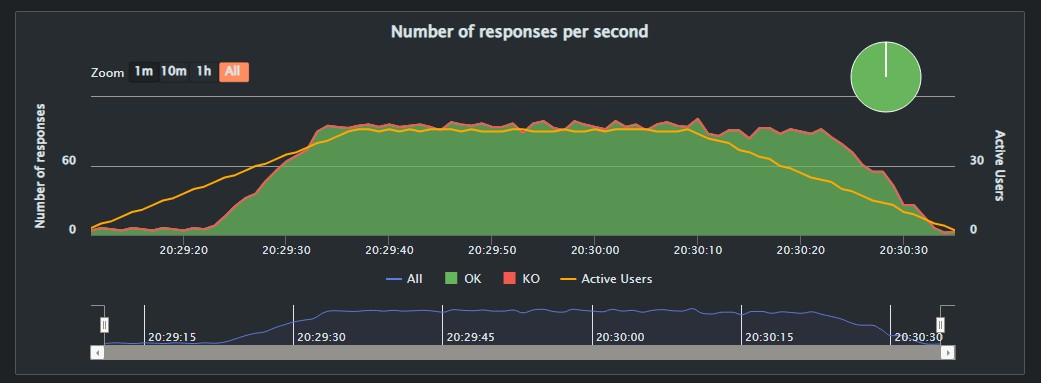

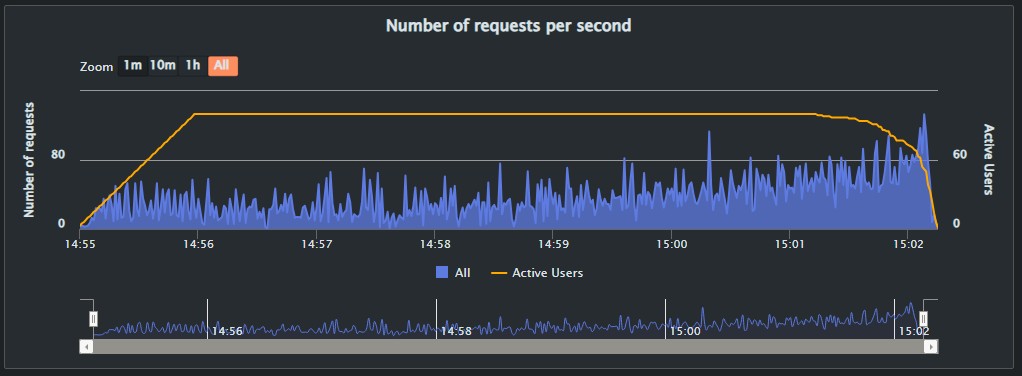

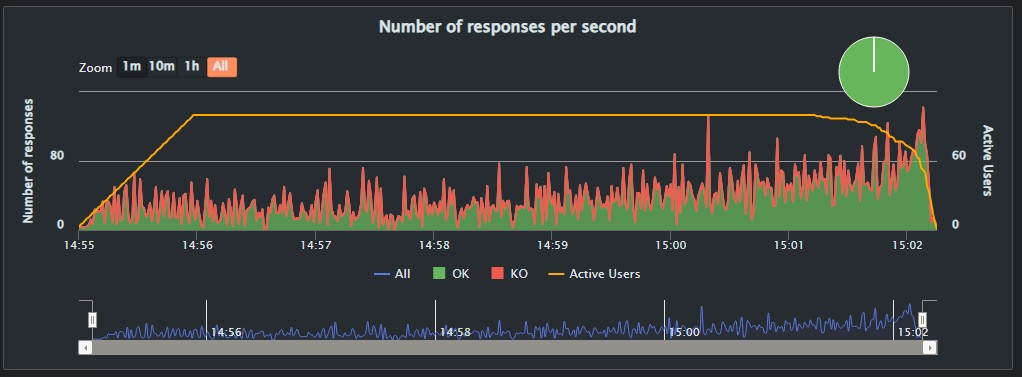

Random Algorithm (easySimulation)

The simulation named "easySimulation" was fast and finished with a perfect score, with no "KOs" (failed requests). This is likely due to several factors, such as the gameboard being the smallest size a user can select before beginning a game, and the nature of the bot itself.

The bot used in this simulation is the simplest of all: its behaviour boils down to choosing a random free square for its move. Because this strategy is simpler than the other two tested algorithms, the bot was able to serve every request without issue.

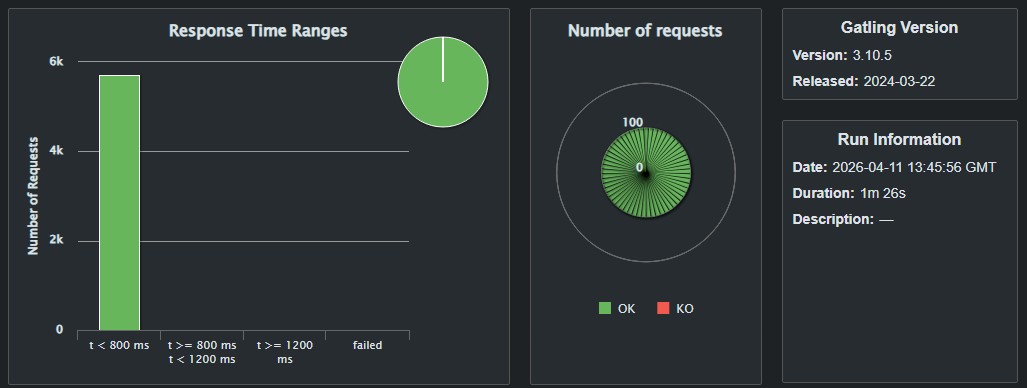

Overall, this simulation handled a total of 5700 requests with an average response time of 8 ms per request.

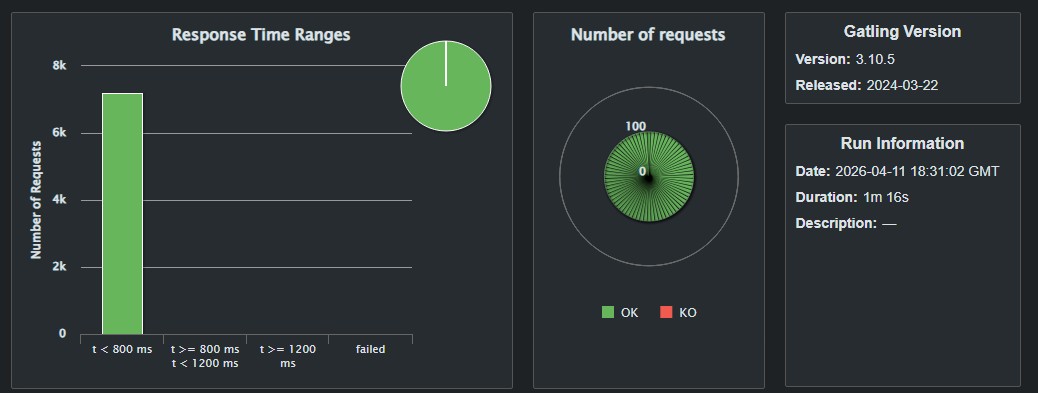

13.2.2. Heuristic Algorithm (heuristicSimulation)

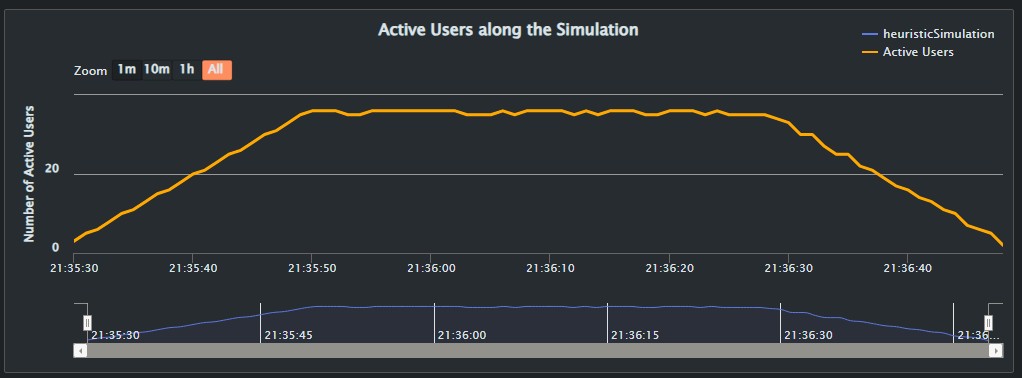

This simulation was even faster than the previous one, finishing in 1 min and 16 seconds, 8 seconds faster than its predecessor.

It also processed 7200 requests without a single failure, the best result so far. The average response time was 4 ms across all 7200 requests. As before, the number of active users grew slowly, levelled off between 31 and 32 for most of the test, and progressively dropped towards the end.

13.2.3. Montecarlo Algorithm (montecarloSimulation)

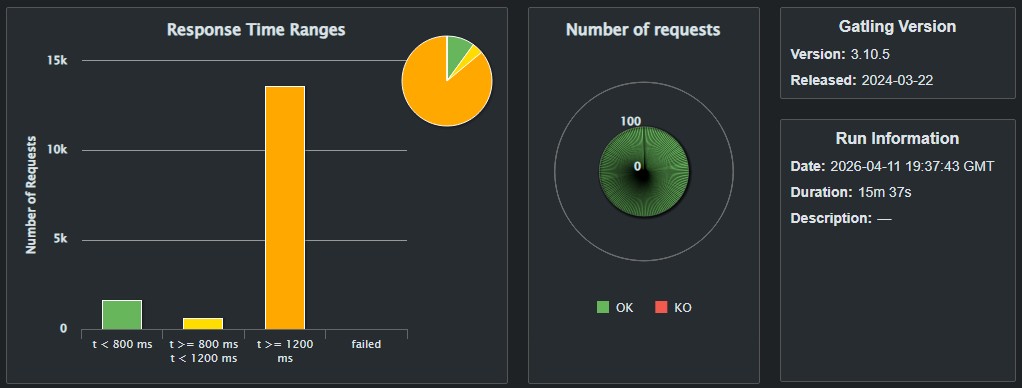

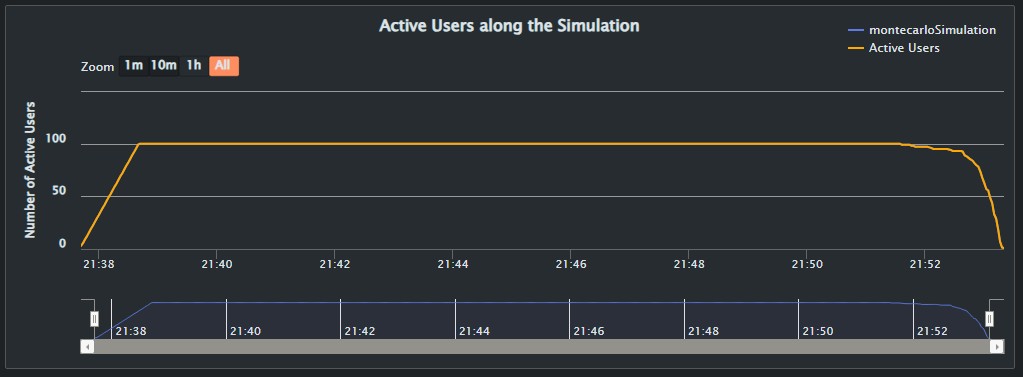

The Montecarlo bot is the most advanced of the three chosen algorithms. Before making a move, it evaluates each possible move by simulating multiple random playouts from the resulting position, and then chooses the move with the highest success rate across all simulations. Our bot is configured to run 100 simulations per move, so it takes considerably longer to move than the other two algorithms. This cost shows clearly in the test results: the simulation took 7 min and 15 seconds to complete, with no failed requests.

However, this simulation is also the first to show a clear bottleneck, with a very uneven distribution of response times. Of a total of 15800 requests, 65% (about 10320 requests) took more than 1200 ms to process, 11% (1708 requests) took between 800 ms and 1200 ms, and 24% (3772 requests) took less than 800 ms. The average response time was 2295 ms across all 15800 requests. We must also take into account that this game was played on the biggest gameboard available (16x16x16), so each match takes longer to finish by default. Given the bottleneck, it is safe to assume that most matches took even longer because of the time spent waiting for the bot to make a move.
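The move selection described above can be sketched as a flat Monte Carlo search. This is a hedged illustration, not the YOVI implementation: the `Game` trait, the `Rng` helper and `choose_move` are hypothetical names, and the sketch reads "100 simulations per move" as 100 random playouts per candidate move, which is an assumption.

```rust
/// Minimal game interface needed by the sketch (hypothetical, not the YOVI engine API).
pub trait Game: Clone {
    type Move: Copy;
    fn legal_moves(&self) -> Vec<Self::Move>;
    fn play(&mut self, mv: Self::Move);
    /// `Some(true)` if the bot has won, `Some(false)` if it has lost,
    /// `None` while the game is still running.
    fn bot_won(&self) -> Option<bool>;
}

/// Tiny xorshift generator so the sketch needs no external crate.
/// The seed must be non-zero.
pub struct Rng(pub u64);

impl Rng {
    /// Pseudo-random index in `0..n` (`n` must be greater than zero).
    pub fn below(&mut self, n: usize) -> usize {
        self.0 ^= self.0 << 13;
        self.0 ^= self.0 >> 7;
        self.0 ^= self.0 << 17;
        (self.0 % n as u64) as usize
    }
}

/// Plays uniformly random moves until the game ends; returns true if the bot won.
fn random_playout<G: Game>(mut state: G, rng: &mut Rng) -> bool {
    loop {
        if let Some(won) = state.bot_won() {
            return won;
        }
        let moves = state.legal_moves();
        if moves.is_empty() {
            return false; // no result and no moves left: count it as a loss
        }
        let mv = moves[rng.below(moves.len())];
        state.play(mv);
    }
}

/// Flat Monte Carlo: for every legal move, run `playouts` random games from the
/// resulting position and return the move with the most wins.
pub fn choose_move<G: Game>(state: &G, playouts: u32, rng: &mut Rng) -> Option<G::Move> {
    let mut best: Option<(G::Move, u32)> = None;
    for mv in state.legal_moves() {
        let mut next = state.clone();
        next.play(mv);
        let mut wins = 0u32;
        for _ in 0..playouts {
            if random_playout(next.clone(), rng) {
                wins += 1;
            }
        }
        if best.map_or(true, |(_, w)| wins > w) {
            best = Some((mv, wins));
        }
    }
    best.map(|(mv, _)| mv)
}
```

In this form the work per bot move grows with the number of legal moves times the number of playouts times the length of each playout, which is why large boards are much more expensive than small ones.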
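The percentages above follow directly from the bucket counts. The short sketch below reproduces that arithmetic; the function name is hypothetical and the counts are the ones quoted in this section.

```rust
/// Share of each bucket as a whole percentage, rounded to the nearest integer.
pub fn bucket_shares(counts: &[u64]) -> Vec<u64> {
    let total: u64 = counts.iter().sum();
    if total == 0 {
        return vec![0; counts.len()];
    }
    counts.iter().map(|&c| (c * 100 + total / 2) / total).collect()
}

/// Bucket counts of the local Montecarlo run:
/// under 800 ms, 800 ms to 1200 ms, over 1200 ms.
pub const DOCKER_MONTECARLO_BUCKETS: [u64; 3] = [3772, 1708, 10320];
```

The three counts add up to the 15800 requests of the run, and `bucket_shares(&DOCKER_MONTECARLO_BUCKETS)` gives 24, 11 and 65 percent respectively.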

13.3. Azure Tests

13.3.1. Context

This next batch of tests was executed against the application deployed remotely on Microsoft Azure. The goal of this second batch is to compare the results of a local deployment, which usually performs better, with those of a more realistic scenario, and to analyse the differences between them.

Random Algorithm (easySimulation)

The random algorithm once again processed 5700 requests, albeit in a slightly longer time: 1 min and 26 seconds, compared to 1 min and 24 seconds for the local deployment. Again, no failures were detected in this execution.

Another interesting aspect of this execution is that it took longer to reach a stable range of active users, holding 48 or 49 simultaneous users for approximately a third of the execution before steadily dropping until the end.

The average response time was 39 ms across all 5700 requests.

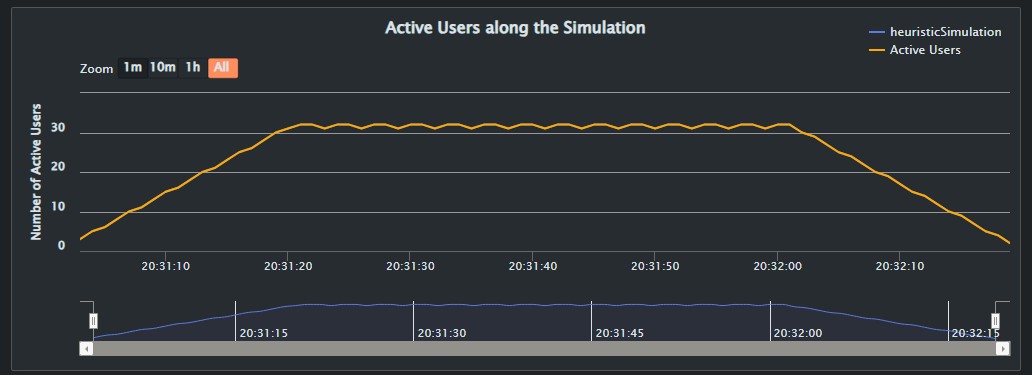

Heuristic Algorithm (heuristicSimulation)

The heuristic simulation was once again faster than the random algorithm, but still marginally slower than the run against the local deployment, with a total run time of 1 min and 18 seconds. No failures were detected in this test.

The average response time was 36 ms for 7200 requests. It also had a higher number of active users during the simulation.

Still, the algorithm processed all requests in under 800 ms, with no failures or timeouts and no bottleneck.

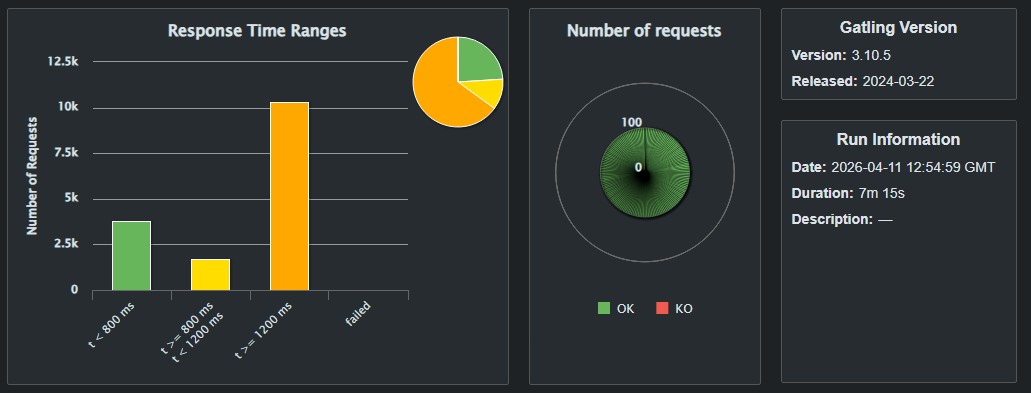

Montecarlo Algorithm (montecarloSimulation)

This test took by far the longest to complete: 15 min and 37 seconds. The bottleneck already detected in the local deployment became even worse: 86% (13584 requests) waited more than 1200 ms for a response, only 4% (603 requests) received a response within 800 ms to 1200 ms, and 10% (1613 requests) got a response in less than 800 ms.

Interestingly, as a result of this bottleneck in both the Docker and Azure deployments, the number of active users during most of the test was the full 100 users configured, meaning that no game finished before all users had been activated. Furthermore, this algorithm shows a faster and steeper increase in the number of users before reaching its peak than the previous two. This is because no user is able to finish a game in less than a minute, so by the time the first user finishes, all users have already been activated. The same is true of the decline in active users at the end of the simulation, which is much steeper than in the other runs.

In total, the average response time was 5424 ms for all 15800 requests.

Comparing the Docker simulation with the Azure simulation reveals some key differences that show the effect of the load on the Azure deployment. First, the number of active users in the Azure deployment rises and falls more steeply than in the previous results. Looking at requests and responses, the results also show that, for the same load, response times increased more in the Azure deployment, especially in the last two minutes of execution. The overall run was also more erratic: it contains many sudden peaks which, although also present in the Docker deployment, were neither as frequent nor as high there. Overall, the first execution in Docker yielded more homogeneous results than the Azure deployment.

13.4. Conclusions

The main issue detected during these tests is that the Montecarlo algorithm creates a bottleneck when the server receives numerous requests. Optimizing the algorithm could drastically improve its performance. Another possible solution would be to lower the number of simulations the bot runs per move from 100 to a smaller value, making it less computationally expensive under high load, as seen during the tests.

Other noteworthy findings are the differences in run time between the simulations and between the Docker and Azure deployments. In both deployments, the heuristic algorithm was the fastest, handling a large number of requests without a bottleneck or failures. Surprisingly, it was generally faster than the simpler random algorithm. All Azure tests took longer to finish than their Docker counterparts: most by margins of only a few seconds, but the Montecarlo algorithm by more than 8 minutes (7 min 15 s versus 15 min 37 s).
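To put the two deployments side by side, the sketch below computes how much the average response time grew from Docker to Azure for each scenario. The averages are the ones reported in the sections above; the `Scenario` type and function names are hypothetical.

```rust
/// Average response times of one scenario on both deployments, in milliseconds.
pub struct Scenario {
    pub name: &'static str,
    pub docker_avg_ms: f64,
    pub azure_avg_ms: f64,
}

/// Averages reported for the three simulations.
pub fn scenarios() -> Vec<Scenario> {
    vec![
        Scenario { name: "easySimulation", docker_avg_ms: 8.0, azure_avg_ms: 39.0 },
        Scenario { name: "heuristicSimulation", docker_avg_ms: 4.0, azure_avg_ms: 36.0 },
        Scenario { name: "montecarloSimulation", docker_avg_ms: 2295.0, azure_avg_ms: 5424.0 },
    ]
}

/// How many times slower the Azure run was than the Docker run.
pub fn slowdown(s: &Scenario) -> f64 {
    s.azure_avg_ms / s.docker_avg_ms
}

/// Absolute increase in average response time, in milliseconds.
pub fn added_latency_ms(s: &Scenario) -> f64 {
    s.azure_avg_ms - s.docker_avg_ms
}
```

With these figures the slowdown factors are roughly 4.9 for the random bot, 9.0 for the heuristic bot and 2.4 for the Montecarlo bot. The Montecarlo bot therefore has the smallest relative slowdown but by far the largest absolute one, about 3.1 seconds per request.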

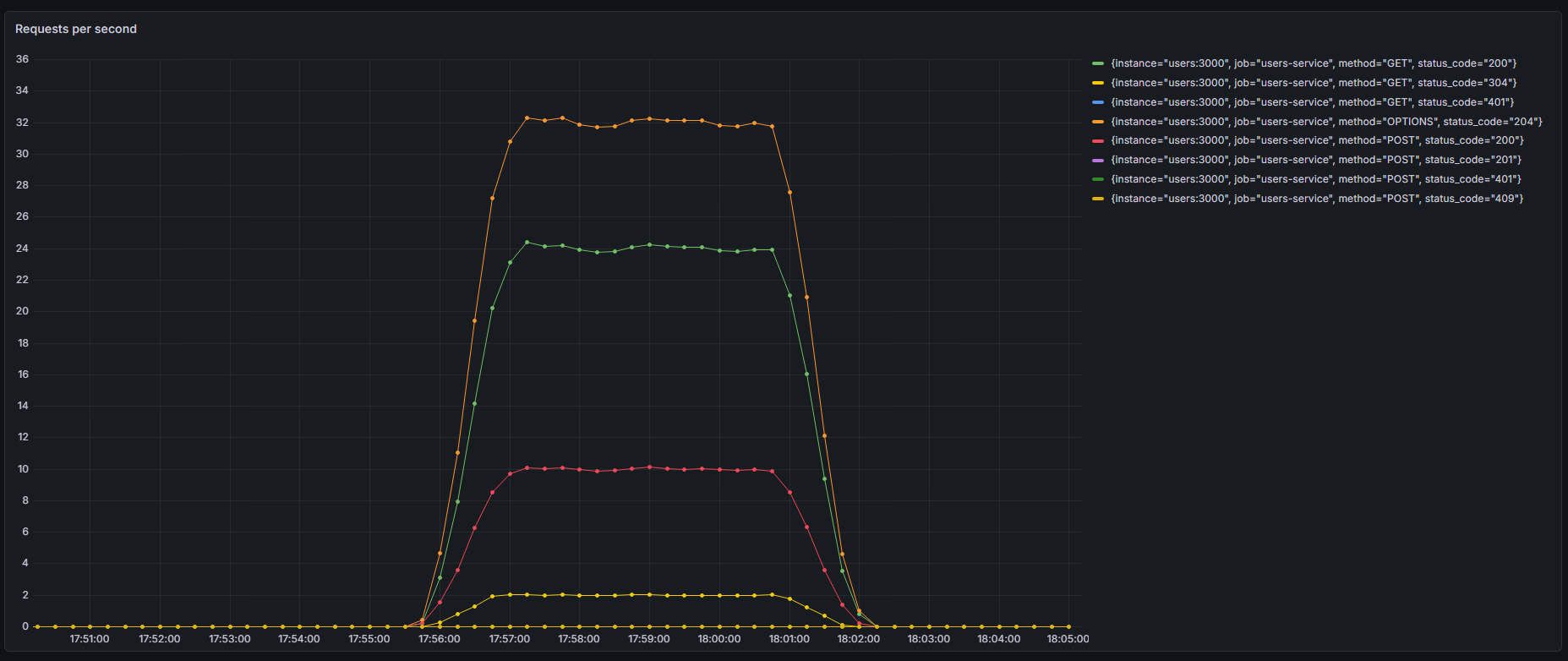

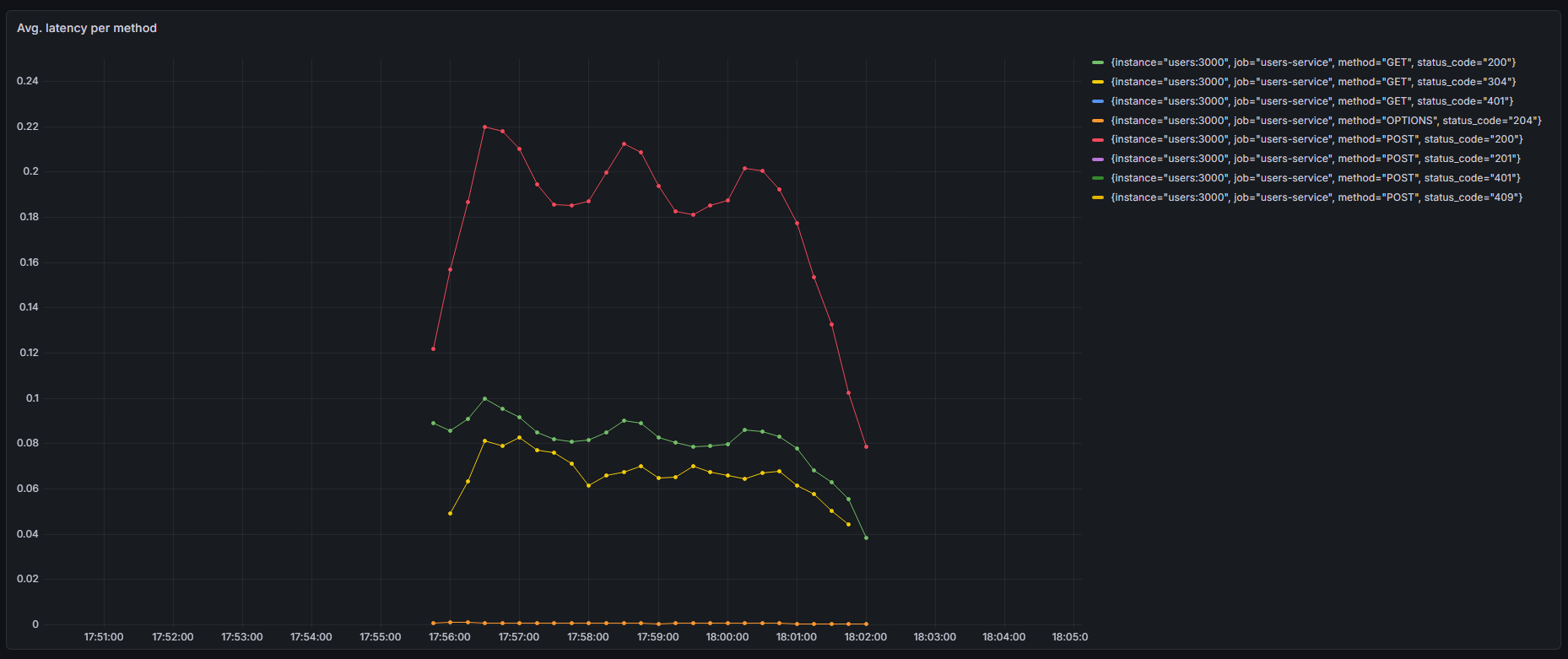

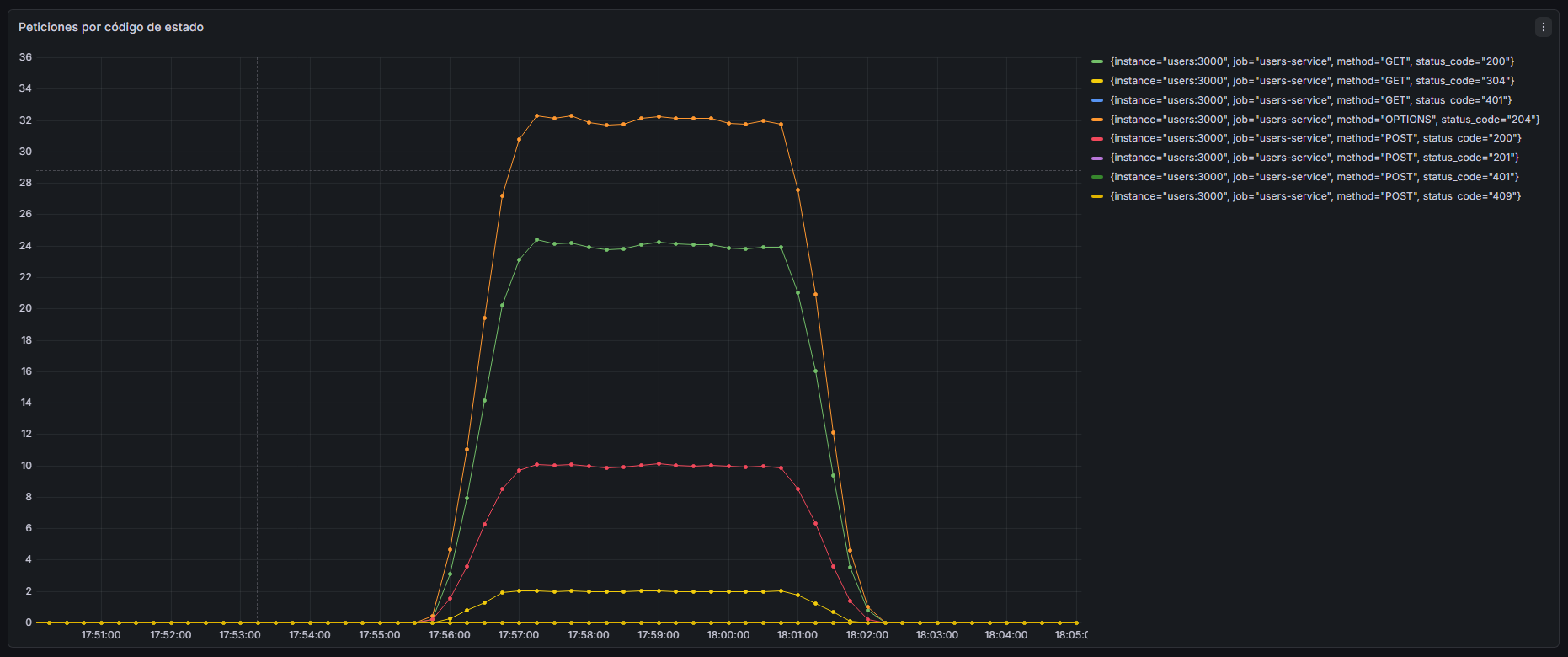

14. Monitoring

Application monitoring is the process by which developers use dedicated software and tools to analyse the performance of an application and ensure it runs as smoothly as possible.

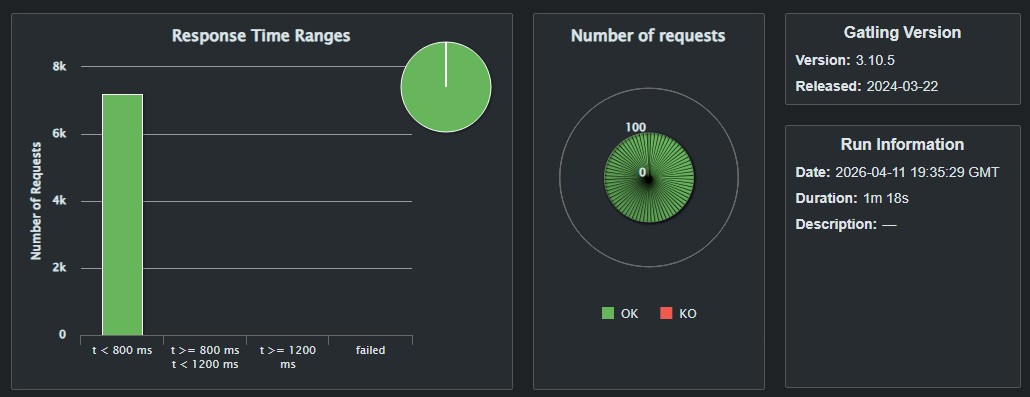

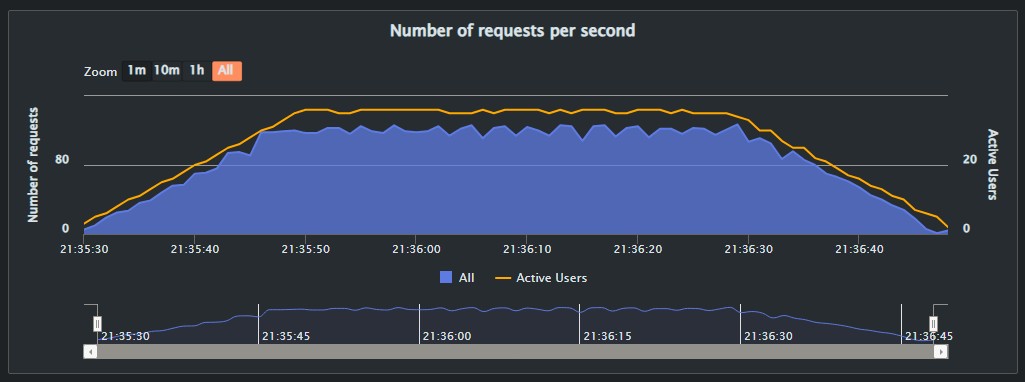

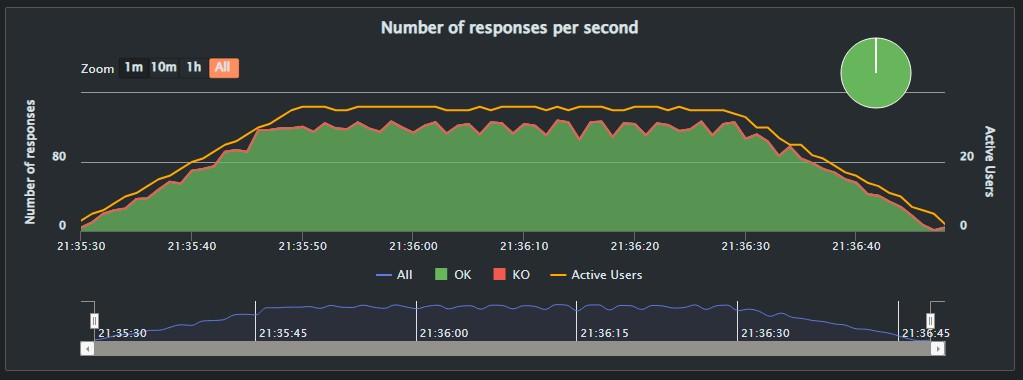

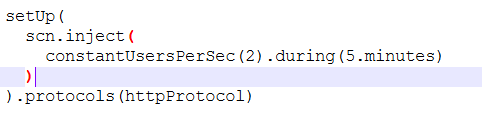

In our case, monitoring focused mainly on the Users Service. To obtain meaningful results, we needed a steady flow of users over a period of time to exercise the service. To achieve this, a new simulation was recorded with the Gatling tool, covering the areas of the app that send requests to the Users Service, such as logins, logouts and queries. The simulation was then configured to keep a maximum of 2 constant users on the app for 5 minutes.

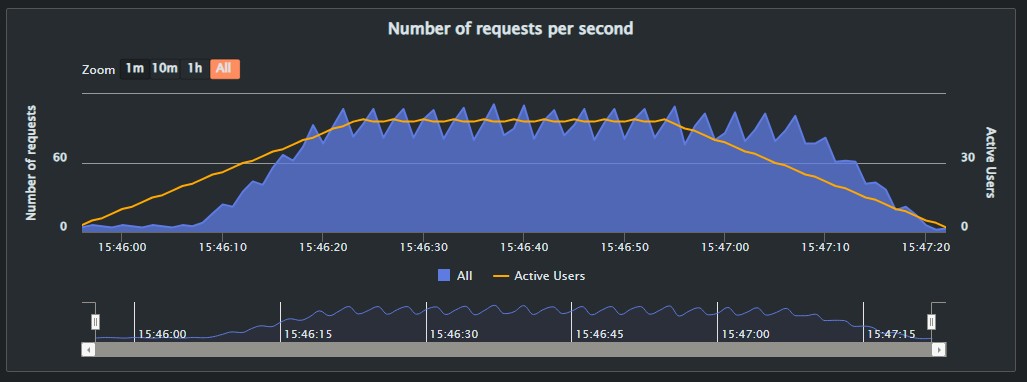

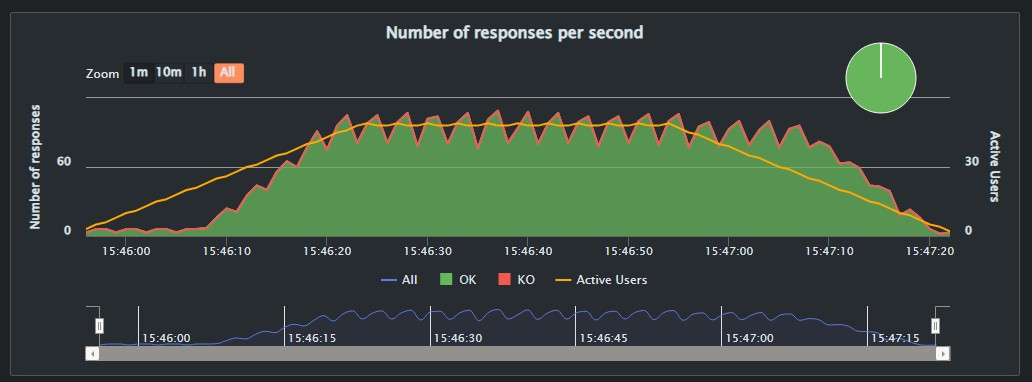

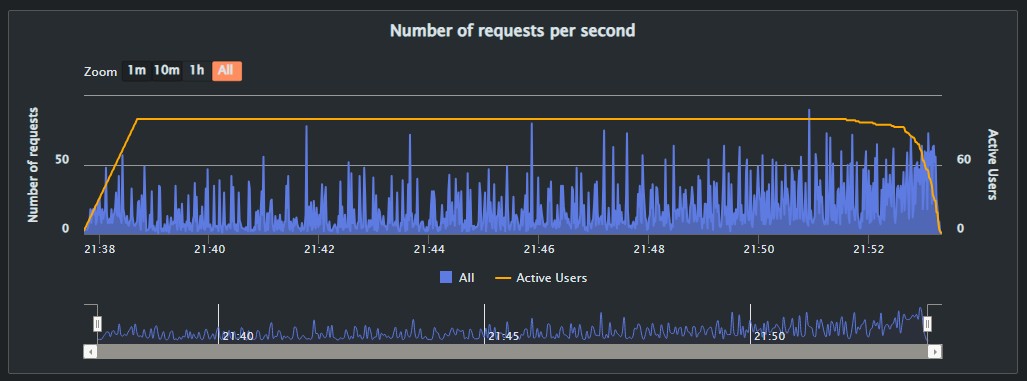

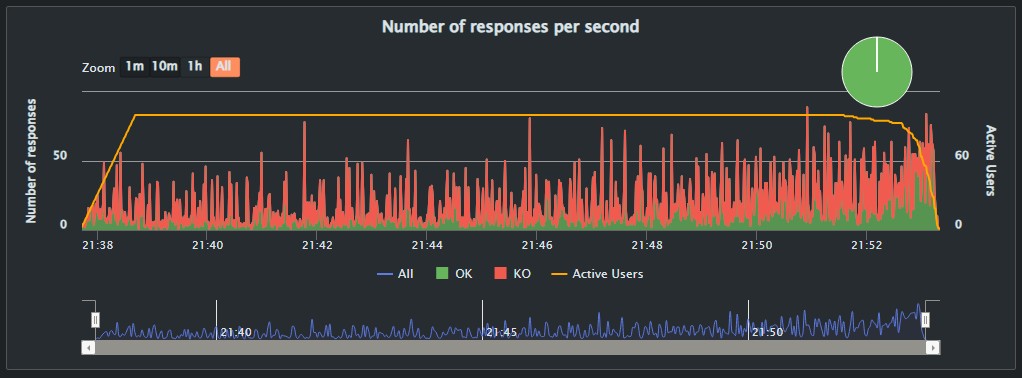

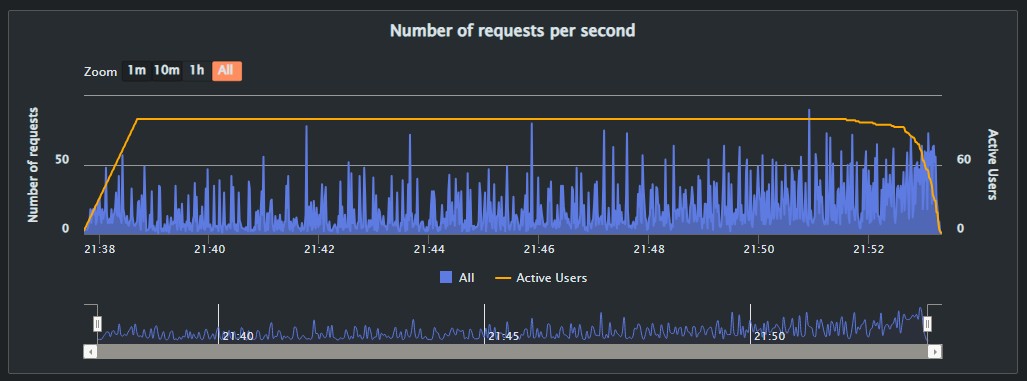

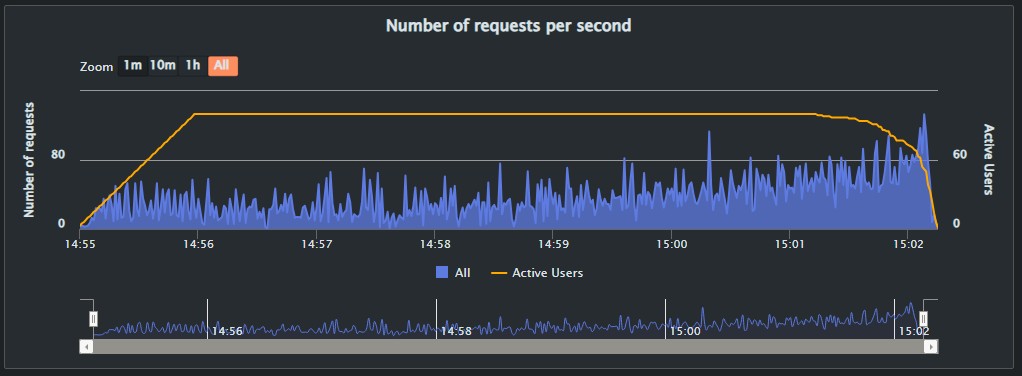

For the monitoring part of the test, we used Prometheus and Grafana to check metrics such as requests per second and the latency of the app.

In this case, a local Docker deployment was used.
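Metrics such as requests per second and average latency are typically derived from cumulative counters sampled over time. The sketch below shows that arithmetic under stated assumptions: the `Sample` type and its field names are hypothetical, the actual dashboard queries are not reproduced in this document, and the code ignores counter resets, which Prometheus's own `rate()` function accounts for.

```rust
/// One scrape of two cumulative counters (hypothetical field names).
#[derive(Clone, Copy)]
pub struct Sample {
    /// Scrape time, in seconds.
    pub at_secs: f64,
    /// Total number of requests served so far.
    pub request_count: f64,
    /// Total time spent serving those requests, in seconds.
    pub duration_sum_secs: f64,
}

/// Average requests per second between two scrapes.
pub fn requests_per_second(a: Sample, b: Sample) -> Option<f64> {
    let dt = b.at_secs - a.at_secs;
    if dt <= 0.0 {
        return None;
    }
    Some((b.request_count - a.request_count) / dt)
}

/// Average latency of the requests served between two scrapes, in seconds.
pub fn average_latency_secs(a: Sample, b: Sample) -> Option<f64> {
    let served = b.request_count - a.request_count;
    if served <= 0.0 {
        return None;
    }
    Some((b.duration_sum_secs - a.duration_sum_secs) / served)
}
```

For example, if 120 requests taking 3 seconds in total are served between two scrapes 60 seconds apart, the sketch yields 2 requests per second and an average latency of 25 ms.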

14.1. The results

In our Grafana dashboard we created the following visualizations:

-

Requests per second

-

Avg. latency per method

-

Requests by status code

The results are shown in the graphs below: